
The only B2B auth solution built for AI-native companies (Sponsor)

Multi-tenant auth has different requirements. Make sure you’re meeting them, whether you’re onboarding humans or AI agents.

With PropelAuth, you get:

MCP support

Organizations

Roles & permissions

Enterprise SSO (SAML/OIDC)

Custom security policies

Don’t get bogged down fighting with subpar auth tools. Try PropelAuth today.

This week, Google, Microsoft, Meta, Amazon, and OpenAI unveiled a landmark anti-scam accord, a voluntary industry pact designed to dismantle the sprawling networks of online fraud plaguing users worldwide.

The tech giants committed to sharing threat intelligence, coordinating investigations, and deploying cutting-edge detection mechanisms to close the gaps that scammers exploit across social media, search engines, messaging apps, and payment gateways.

With global scam losses topping $1.2 trillion in 2025 (per Chainalysis), and AI lowering the entry barrier for cybercriminals, the accord reflects a seismic shift where fraud is no longer a per-platform nuisance but a systemic, interconnected crisis demanding collective firepower.

For tech professionals, from product managers optimizing user trust to engineers scaling ML defenses, this pact could turn cross-platform scam intelligence into a core infrastructure layer.

Let’s unpack its mechanics, motivations, limitations, and what it means for the AI arms race ahead.

The Evolution of Cross-Platform Threats

Online scams have metastasized. A decade ago, fraud was mostly contained: phishing emails stayed in inboxes, pump-and-dump schemes festered on niche forums.

Today, attackers orchestrate symphony-like operations across ecosystems. Picture this: a deepfake video drops on Meta’s Facebook and lures victims to a fraudulent Telegram group, where AI-generated chatbots extract credentials and the proceeds are finally funneled to Amazon Pay or crypto wallets.

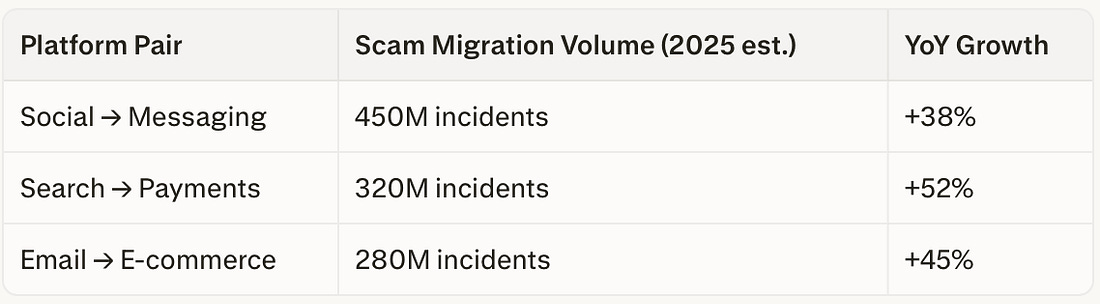

Data underscores the urgency:

These flows expose enforcement silos. Google’s Safe Browsing blocks 150M malicious sites daily, yet scammers pivot to Meta’s ecosystem within hours. Isolated, per-platform defenses, such as rule-based filters or basic ML classifiers, fail against adaptive networks that use VPNs, proxies, and ephemeral domains.

The accord’s fix? A shared intelligence fabric, likely built on APIs akin to Microsoft’s Threat Intelligence Exchange, enabling real-time signal propagation. For engineers, this means federating graph databases to map scam constellations, where nodes represent actors and edges denote cross-app behaviors.
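To make the graph idea concrete, here is a minimal Python sketch, assuming each platform contributes edges between shared identifiers (accounts, URLs, group IDs, wallet addresses). The function `scam_constellations` and the sample identifiers are hypothetical, not part of any published accord API. It merges the per-platform edge lists with a union-find structure and keeps only clusters that span more than one platform, which is exactly the signal no single platform can see on its own.

```python
from collections import defaultdict


class UnionFind:
    """Disjoint-set structure used to merge actors linked by any platform's edges."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a, b):
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[rb] = ra


def scam_constellations(platform_edges):
    """platform_edges maps a platform name to the (actor_a, actor_b) edges it observed.

    Returns (members, platforms) for every connected cluster seen on 2+ platforms.
    """
    uf = UnionFind()
    actor_platforms = defaultdict(set)
    for platform, edges in platform_edges.items():
        for a, b in edges:
            uf.union(a, b)
            actor_platforms[a].add(platform)
            actor_platforms[b].add(platform)

    clusters = defaultdict(set)
    for actor in actor_platforms:
        clusters[uf.find(actor)].add(actor)

    result = []
    for members in clusters.values():
        platforms = set().union(*(actor_platforms[m] for m in members))
        if len(platforms) > 1:  # a cross-platform "constellation"
            result.append((members, platforms))
    return result


# Hypothetical example: one scam chain hops social -> messaging -> payments.
edges = {
    "social": [("acct:fb_123", "url:scam.example")],
    "messaging": [("url:scam.example", "group:tg_456")],
    "payments": [("group:tg_456", "wallet:0xabc")],
    "search": [("site:a.example", "site:b.example")],
}
for members, platforms in scam_constellations(edges):
    print(sorted(platforms), sorted(members))
```

Union-find keeps the merge close to linear in the number of edges; a production deployment would more plausibly sit on a graph database with typed, timestamped edges, but the core question (which clusters cross platform boundaries?) is the same.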

AI: The Great Equalizer for Cybercriminals

Artificial intelligence has democratized deception, turning solo hackers into scalable operations. Generative models like those from OpenAI (ironically a signatory) now spit out convincing phishing lures in seconds. Consider the anatomy of an AI scam:

Synthetic Content Generation: LLMs craft hyper-personalized emails using scraped social data, e.g., “John, your Colombian bank alert awaits.”

Deepfake Escalation: Tools clone voices for robocalls, with success rates hitting 30% in blind tests (UC Berkeley study).

Automated Scaling: Reinforcement learning agents test variants across platforms, optimizing for click-through like A/B experiments gone rogue.

Barriers have plummeted: what once cost $10K in manpower now runs on a $20/month API. FTC data shows AI-linked scams surged 67% year over year, reframing fraud as a computational challenge. Defenders counter with adaptive systems, such as ensemble models that blend transformers for NLP phishing detection, scoring a message as $P(\text{phish}) = \sigma(W \cdot \text{embed}(\text{text}) + b)$, where $\sigma$ is the logistic sigmoid, $\text{embed}(\text{text})$ is a learned text embedding, and $W$ and $b$ are learned weights and bias, with graph neural networks handling network analysis. The accord accelerates this via pooled datasets, potentially unlocking multimodal AI that fuses text, voice, and behavioral signals for 95%+ accuracy.
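The phishing score in the paragraph above is plain logistic regression over a text embedding. The Python sketch below assumes a toy hashed bag-of-words `embed` as a stand-in for a transformer encoder, and fits `W` and `b` with stochastic gradient descent on four hypothetical labeled messages. All names, sizes, and data here are illustrative, not any signatory’s actual model.

```python
import hashlib
import math

DIM = 256  # hypothetical embedding size


def embed(text: str, dim: int = DIM) -> list[float]:
    """Toy stand-in for embed(text): hashed bag-of-words, L2-normalized."""
    vec = [0.0] * dim
    for token in text.lower().split():
        bucket = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def p_phish(text: str, W: list[float], b: float) -> float:
    """P(phish) = sigma(W . embed(text) + b)."""
    x = embed(text, len(W))
    return sigmoid(sum(w * xi for w, xi in zip(W, x)) + b)


def train(examples, dim: int = DIM, lr: float = 0.5, epochs: int = 500):
    """Fit W, b by SGD on the logistic loss; labels are 1 (phish) or 0 (benign)."""
    W, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        for text, label in examples:
            x = embed(text, dim)
            err = p_phish(text, W, b) - label  # gradient of the loss w.r.t. the logit
            W = [w - lr * err * xi for w, xi in zip(W, x)]
            b -= lr * err
    return W, b


examples = [
    ("urgent verify your account password now", 1),
    ("your wallet is locked click here to verify", 1),
    ("lunch meeting moved to friday", 0),
    ("attached are the quarterly planning notes", 0),
]
W, b = train(examples)
for text, _ in examples:
    print(f"{p_phish(text, W, b):.3f}  {text}")
```

A real detector would swap the hashed embedding for a learned encoder and train on far more data; the logistic head, and the gradient `p - label` that drives it, stay the same.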

Yet, irony abounds. Signatories’ own tech powers attackers. OpenAI’s voluntary safeguards (e.g., usage policies) have proved porous: jailbroken models now flood dark web markets.

A Voluntary Framework

No penalties, no central enforcer—just goodwill. The pact outlines three pillars:

Shared Intelligence: Anonymized feeds on IOCs (indicators of compromise) like IP clusters or behavioral fingerprints.

Coordinated Probes: Joint task forces, echoing the model of Europol’s Joint Cybercrime Action Taskforce (J-CAT).

Detection Harmonization: Standardized ML benchmarks for fraud scoring.
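What might “anonymized feeds on IOCs” look like in practice? One common technique is keyed hashing: each partner publishes an HMAC of a normalized indicator instead of the raw value, so any other key holder can test its own observations for a match. The sketch below assumes a consortium-wide secret key and a hypothetical `SharedIOC` record layout; neither is something the accord is known to specify. One caveat: low-entropy indicators such as IPv4 addresses can be brute-forced by anyone holding the key, so this hides values from outsiders, not from fellow partners.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class SharedIOC:
    kind: str    # e.g. "ip", "domain", "wallet" (hypothetical taxonomy)
    token: str   # HMAC-SHA256 of the normalized value, never the raw value
    source: str  # reporting platform


def normalize(kind: str, value: str) -> str:
    """Canonicalize so two partners hash the same indicator identically."""
    value = value.strip()
    return value.lower().rstrip(".") if kind == "domain" else value


def anonymize(kind: str, value: str, key: bytes, source: str) -> SharedIOC:
    msg = f"{kind}:{normalize(kind, value)}".encode("utf-8")
    token = hmac.new(key, msg, hashlib.sha256).hexdigest()
    return SharedIOC(kind, token, source)


def match_local(feed, local_observations, key: bytes):
    """Return the local (kind, value) pairs that some partner has already reported."""
    shared = {(ioc.kind, ioc.token) for ioc in feed}
    return [
        (kind, value)
        for kind, value in local_observations
        if (kind, anonymize(kind, value, key, "local").token) in shared
    ]


KEY = b"consortium-demo-key"  # hypothetical shared secret
feed = [
    anonymize("domain", "Scam-Shop.example.", KEY, "platform_a"),
    anonymize("ip", "203.0.113.7", KEY, "platform_b"),
]
print(match_local(feed, [("domain", "scam-shop.example"), ("ip", "198.51.100.1")], KEY))
```

Normalization matters as much as the hash: without lowercasing and trimming the trailing dot, `Scam-Shop.example.` and `scam-shop.example` would never match across partners.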

Promising, but history tempers optimism. The 2018 Tech Against Terrorism coalition curbed 80% of ISIS content but faltered because enforcement varied across members. Similarly, the Global Internet Forum to Counter Terrorism (GIFCT) hashed millions of videos, yet non-signatories diluted its impact. Without KPIs (e.g., “reduce cross-platform conversions by 20% in 12 months”), the accord risks becoming a checkbox exercise.
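A KPI like the one quoted above is easy to make mechanically checkable. This sketch assumes a hypothetical count of “cross-platform conversions” (for example, victims who move from a lure on one platform to a payment on another; the exact definition is an assumption) measured at baseline and again after 12 months. The comparison is rearranged into integer arithmetic so a result of exactly 20% is not lost to floating-point rounding.

```python
def kpi_met(baseline: int, after_12_months: int, target_pct: int = 20) -> bool:
    """True if conversions fell by at least target_pct percent versus baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    # (baseline - after) / baseline >= target_pct / 100, kept in integers
    return (baseline - after_12_months) * 100 >= target_pct * baseline


def reduction_pct(baseline: int, after_12_months: int) -> float:
    """Observed reduction, as a percentage of the baseline."""
    return 100.0 * (baseline - after_12_months) / baseline


print(kpi_met(10_000, 8_000), reduction_pct(10_000, 8_000))
print(kpi_met(10_000, 8_500), reduction_pct(10_000, 8_500))
```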